Google's Genie 3 Isn't a Gaming Toy—It's the Foundation for Embodied AGI

How world models are becoming the next frontier after large language models, and why the vision of superhuman AI is closer than ever.

On January 29, 2026, Google DeepMind quietly released something that might matter more than any AI announcement this year. Not because of what it does today—but because of what it reveals about tomorrow.

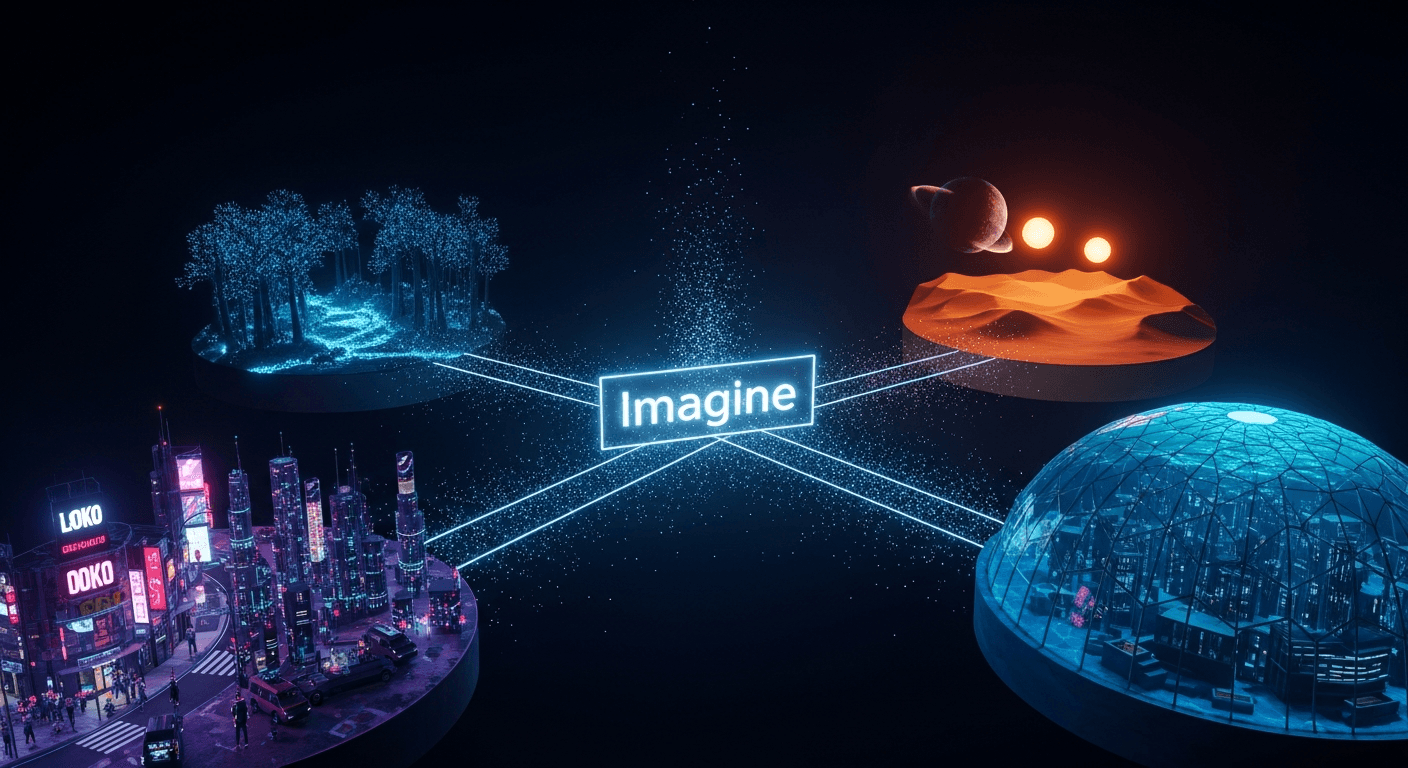

Genie 3 generates interactive 3D worlds from text prompts. Type "a forest with bioluminescent mushrooms at twilight" and within three seconds, you're exploring it, navigating in real time at 720p and 24 frames per second. Turn around, and the world you just passed through is still there: consistent, coherent, remembered.

The immediate reaction was predictable: cool tech demo, maybe useful for games, limited by the 60-second session cap. Most coverage focused on the $250/month Google AI Ultra subscription required for access.

But that framing misses the point entirely.

The GPT-2 Moment for 3D

Developer @founderengineer captured it best on Twitter: "Google's Genie 3 is a GPT-2 level release. It is clear that when scaled up, video games will be vibe-designed."

Remember GPT-2 in 2019? It generated coherent text for a few paragraphs before degrading into nonsense. Impressive for researchers. Dismissed by skeptics. "Cute demo, not practical."

By 2023, GPT-4 was producing coherent documents with complex reasoning, powering applications across every industry, and serving as infrastructure that millions build upon daily.

Genie 3 generates coherent worlds for about 60 seconds before consistency degrades. Impressive for early users. Dismissed by skeptics focused on limitations.

If you see the pattern, you see the future.

Not a Product—Infrastructure

Here's what the consumer-focused coverage misses: Genie 3 isn't really a product launch. It's a capability demonstration wrapped in a research prototype.

The tell is the SIMA 2 integration. SIMA (Scalable Instructable Multiworld Agent) is DeepMind's generalist AI agent for 3D environments. The latest version doesn't just follow instructions—it reasons about them, powered by Gemini's language capabilities.

The magic happens when you combine them:

- Genie 3 generates a world the agent has never seen

- SIMA 2 navigates the world and attempts tasks

- Gemini scores the attempts and generates new tasks

- SIMA improves from its own failures

- Genie generates new worlds for continued learning

DeepMind calls this an "endless virtual training dojo." No human demonstration data required. The agent teaches itself through trial and error in procedurally generated environments.
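To make the loop concrete, here is a minimal toy sketch of the five steps above. Everything in it is an assumption for illustration: `generate_world`, `propose_task`, `score_attempt`, and `ToyAgent` are hypothetical stand-ins for the roles the article assigns to Genie 3, Gemini, and SIMA 2, and the learning rule is invented. It is not DeepMind's API or training method, only the shape of the feedback loop.

```python
import random
from dataclasses import dataclass


def generate_world(rng):
    """Hypothetical stand-in for the world model: returns a new, unseen environment."""
    return {"seed": rng.randrange(10**6), "difficulty": rng.uniform(1.0, 2.0)}


def propose_task(world, rng):
    """Hypothetical stand-in for the task generator."""
    return rng.choice(["reach the exit", "pick up the red object", "open the door"])


def score_attempt(succeeded):
    """Hypothetical stand-in for the judge; a real judge would score a full trajectory."""
    return 1.0 if succeeded else 0.0


@dataclass
class ToyAgent:
    """Hypothetical stand-in for the agent; skill is a success rate in [0, 1]."""
    skill: float = 0.1

    def attempt(self, world, task, rng):
        # Harder worlds lower the chance of success.
        return rng.random() < self.skill / world["difficulty"]

    def update(self, succeeded):
        # Invented toy rule: a failure teaches more than a success.
        gain = 0.01 if succeeded else 0.03
        self.skill = min(1.0, self.skill + gain)


def training_dojo(episodes, seed=0):
    """Run the generate -> attempt -> score -> improve loop with no human demonstrations."""
    rng = random.Random(seed)
    agent = ToyAgent()
    rewards = []
    for _ in range(episodes):
        world = generate_world(rng)                  # a world the agent has never seen
        task = propose_task(world, rng)              # a freshly generated task
        succeeded = agent.attempt(world, task, rng)  # the agent tries it
        rewards.append(score_attempt(succeeded))     # the attempt is scored
        agent.update(succeeded)                      # the agent improves from the outcome
    return agent, rewards


if __name__ == "__main__":
    agent, rewards = training_dojo(episodes=50)
    print(f"final skill: {agent.skill:.2f}, successes: {int(sum(rewards))}/50")
```

The point of the sketch is structural: every component that would normally need human-authored data (the environment, the task, the grade) is produced by a model inside the loop.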

This isn't a gaming feature. This is how you scale embodied AGI.

The Robotics Revolution Hidden in Plain Sight

Training robots in the real world is expensive, dangerous, and slow. You can't crash a million delivery drones to train the million-and-first.

But you can generate a million warehouse variations in simulation. A million outdoor scenarios. A million edge cases that would take decades to encounter naturally.

World models solve the data bottleneck for embodied AI. Genie 3 generates environments with behavioral dynamics beyond physics—weather patterns, agent behaviors, emergent interactions. The training signal is richer than hand-coded simulations could ever provide.
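For contrast, the hand-coded approach this paragraph compares against is usually called domain randomization: you pick a handful of parameters and sample them at random for each training episode. The sketch below shows that baseline under stated assumptions. `WarehouseConfig`, its fields, and the value ranges are all hypothetical, chosen only to illustrate the idea.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class WarehouseConfig:
    """Hypothetical, hand-picked parameters for one simulated warehouse."""
    aisle_count: int
    lighting_lux: float
    floor_friction: float
    moving_obstacles: int


def sample_warehouse(rng):
    """Draw one warehouse variation; the ranges are illustrative assumptions."""
    return WarehouseConfig(
        aisle_count=rng.randint(4, 40),
        lighting_lux=rng.uniform(50.0, 1000.0),
        floor_friction=rng.uniform(0.3, 0.9),
        moving_obstacles=rng.randint(0, 25),
    )


def sample_variations(n, seed=0):
    """Generate n variations reproducibly from a single seed."""
    rng = random.Random(seed)
    return [sample_warehouse(rng) for _ in range(n)]


if __name__ == "__main__":
    for config in sample_variations(3):
        print(config)
```

Every axis of variation here had to be named by an engineer in advance. The article's argument is that a learned world model can vary things nobody thought to parameterize.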

The consumer prototype generating fantasy worlds? That's the interface that funds the research. Every user interaction generates training data. The $250/month subscription subsidizes the infrastructure that will train tomorrow's robots.

The Gaming Industry's Existential Question

The Game Developers Conference 2026 State of the Game Industry survey found that 52% of gaming professionals now believe generative AI negatively impacts the industry, up from 30% just one year prior. Visual artists, level designers, and environment artists expressed the strongest concerns.

They're not wrong to worry. Genie 3 enables "zero-code prototyping." Type a level description. Explore it seconds later. Iterate through conversation. What took a team weeks takes an individual minutes.

But the disruption narrative oversimplifies.

The more accurate framing: roles evolve from execution to direction. From creating assets to curating AI-generated assets. From building worlds to guiding AI world-building.

The skill shifts from "can you model this environment?" to "can you describe what you want and recognize when AI delivers it?" Creative vision becomes more valuable. Pure execution becomes commoditized.

Those who learn to direct AI systems rather than compete with them will capture disproportionate value. This has been true for every automation wave. This one is no different.

The Augmi Connection

Genie 3 validates the mission behind Augmi: turning humans into superhumans through AI agents that build, analyze, visualize, and execute, all through conversation.

The core thesis: AI that produces visible, navigable output creates fundamentally different user relationships than black-box systems.

The promise—"Voice In → AI Agents Execute → Visual Clarity Out"—describes exactly what Genie 3 demonstrates for 3D environments. Speak what you want. Agents build it. See and explore the result.

The pattern applies beyond gaming:

- Code becomes architecture you walk through

- Data becomes landscapes you navigate

- Processes become environments you observe

Visual comprehension isn't a feature. It's the interface paradigm that makes AI-generated complexity manageable.

What This Means for Builders

If you're a developer: Learn world model concepts now. The interface patterns are more predictable than the specific technology. Text-to-3D pipelines, spatial computing frameworks, agent orchestration—these skills transfer regardless of which model wins.

If you're building a startup: Infrastructure opportunities abound. Tools bridging world models to applications. Vertical solutions for training simulation, architectural visualization, education. Voice-to-world interfaces that make 3D creation as accessible as text generation.

If you're in gaming: The prototyping transformation is coming whether you're ready or not. AI-generated first drafts become standard. Your competitive advantage shifts to taste, curation, and the creative direction AI can't replicate.

If you're thinking about the future: The convergence is clear. Voice input meets world model generation meets spatial computing display. This is the next computing paradigm—not a feature within existing paradigms.

The Window Is Open

World models are the next modality after large language models. The 2-3 year window to develop expertise is open now.

Companies that built on transformer architecture assumptions in 2020-2022 captured disproportionate value as LLMs scaled. The same opportunity exists for world models.

The constraints are temporary:

- 60-second sessions will extend to hours

- 720p resolution will become photorealistic

- Single-agent experiences will become multi-user worlds

- Research previews will become public APIs

The direction is permanent. Digital environments generated through conversation, explored spatially, populated by AI agents that learn and adapt.

The code of the future won't just be read. It will be walked through.

And for those building tools that make humans superhuman? The foundation just got a lot more solid.

Written by

Global Builders Club
