Sam Altman's AI Combinator: Why the Best AI Won't Execute Your Ideas—It'll Make Them Better

The OpenAI CEO's vision for AI that improves human thinking, not just human productivity. Why 5% accuracy is transformative for ideation.

Every AI application I've ever built focused on the same thing: execution. Can AI write this code? Can AI draft this email? Can AI research this topic?

Then Sam Altman said something in a recent town hall that reframed everything:

"We think at the limits of our tools. And I think making a tool that helps people think better, have better ideas—it feels like it's gotta be incredibly empowering."

This isn't about AI executing tasks. It's about AI improving the quality of human thinking itself.

The "Human Slop" Problem

While everyone debates AI-generated slop flooding the internet, Altman points to a bigger problem: human slop.

Human slop is:

- Mediocre ideas that waste months of effort

- Derivative thinking that follows conventional wisdom

- Projects that never should have been started

- Confirmation bias that prevents breakthrough insights

AI slop is annoying. Human slop is expensive.

Think about it: How many startup ideas fail not because of execution, but because the idea was flawed from day one? How many products ship features nobody wanted? How many projects consume resources pursuing the wrong goal?

The constraint isn't execution. It's ideation.

The 5% Principle

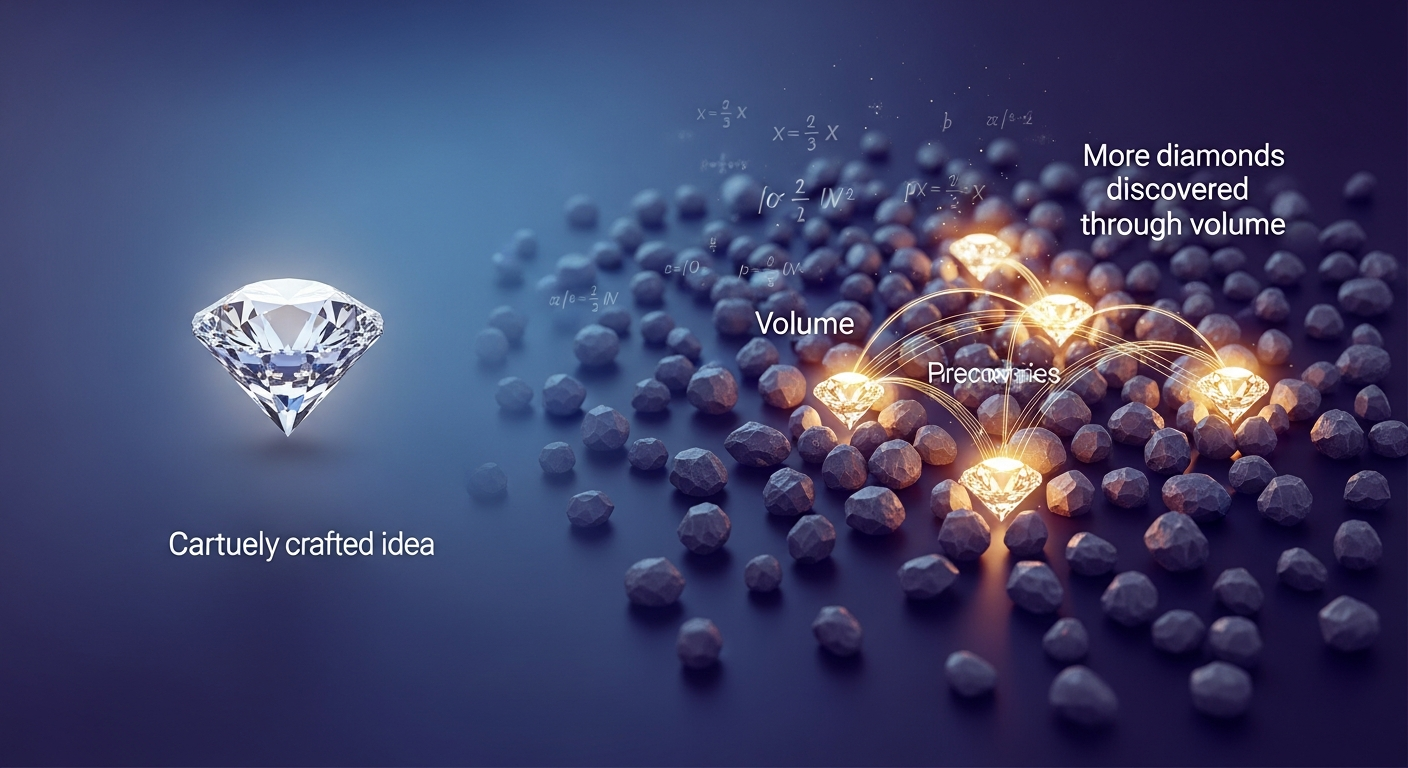

Here's the counterintuitive insight from AINews editor swyx: for idea generation, even 5% useful output is transformative.

Wait, what? In almost every other AI application, 5% accuracy would be useless. Even far higher rates fall short there. Code that compiles 95% of the time? Garbage. Legal advice that's 90% correct? Malpractice.

But for brainstorming, the math inverts:

- The search space for good ideas is vast

- Human time spent on bad ideas is the real cost

- One breakthrough insight justifies hundreds of misses

If AI generates 1,000 ideas and 50 are worth considering, that's a 5% hit rate, and it's transformative. A human might generate 10 ideas in the same time and find zero worth pursuing.
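To make the arithmetic concrete, here is a minimal sketch in Python. It assumes every idea is an independent draw with the same 5% chance of being worth considering, and it applies that same rate to the human for comparison. Both are simplifying assumptions of this sketch, not claims from swyx or Altman, and the function names are illustrative.

```python
def expected_hits(n_ideas: int, hit_rate: float) -> float:
    """Expected number of ideas worth considering."""
    return n_ideas * hit_rate


def p_at_least_one(n_ideas: int, hit_rate: float) -> float:
    """Chance that at least one idea is a hit, assuming independent draws."""
    return 1.0 - (1.0 - hit_rate) ** n_ideas


HIT_RATE = 0.05  # the 5% figure from the article

ai_hits = expected_hits(1000, HIT_RATE)    # about 50 ideas worth a look
human_hits = expected_hits(10, HIT_RATE)   # about 0.5, so often none at all
ai_any = p_at_least_one(1000, HIT_RATE)    # effectively 1.0
human_any = p_at_least_one(10, HIT_RATE)   # about 0.40

print(f"AI: {ai_hits:.0f} expected hits, P(any hit) = {ai_any:.2f}")
print(f"Human: {human_hits:.1f} expected hits, P(any hit) = {human_any:.2f}")
```

Under these assumptions, a batch of 10 ideas comes up empty roughly 60% of the time, which matches the "find zero worth pursuing" scenario. The volume does the work, not the per-idea accuracy.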

Design implication: AI optimized for ideation should maximize diversity and surprise, not accuracy and precision.

The "AI Paul Graham" Challenge

swyx asked an interesting question: What would it take to build an "AI Paul Graham"—an AI that tells you your idea sucks, brainstorms with you, or shares the insight that flips your perspective?

The obvious approaches don't work:

- RAG on his essays retrieves conclusions, not reasoning

- Finetuning on style captures voice without wisdom

- Simple prompting produces shallow imitation

The real challenge is encoding tacit knowledge—what PG knows intuitively but has never articulated. When to apply which principle. How to recognize patterns that signal future problems.

This isn't retrieval. It's judgment.

The Validation: GPT-5.2's Scientific Contributions

This isn't theoretical. GPT-5.2 is already demonstrating the thought partner pattern:

- Math: Provided the key insight for an Erdős number theory proof (verified by mathematicians Sawhney and Sellke)

- Biology: Identified a mechanism from unpublished data that led to experimental confirmation

- Benchmarks: 92% on GPQA (doctoral-level science) vs 39% for GPT-4

OpenAI's Kevin Weil calls it a "digital brainstorming partner for researchers."

Sam's timeline: "Small discoveries were going to start in 2026. They started in 2025, in late 2025."

What This Means for Builders

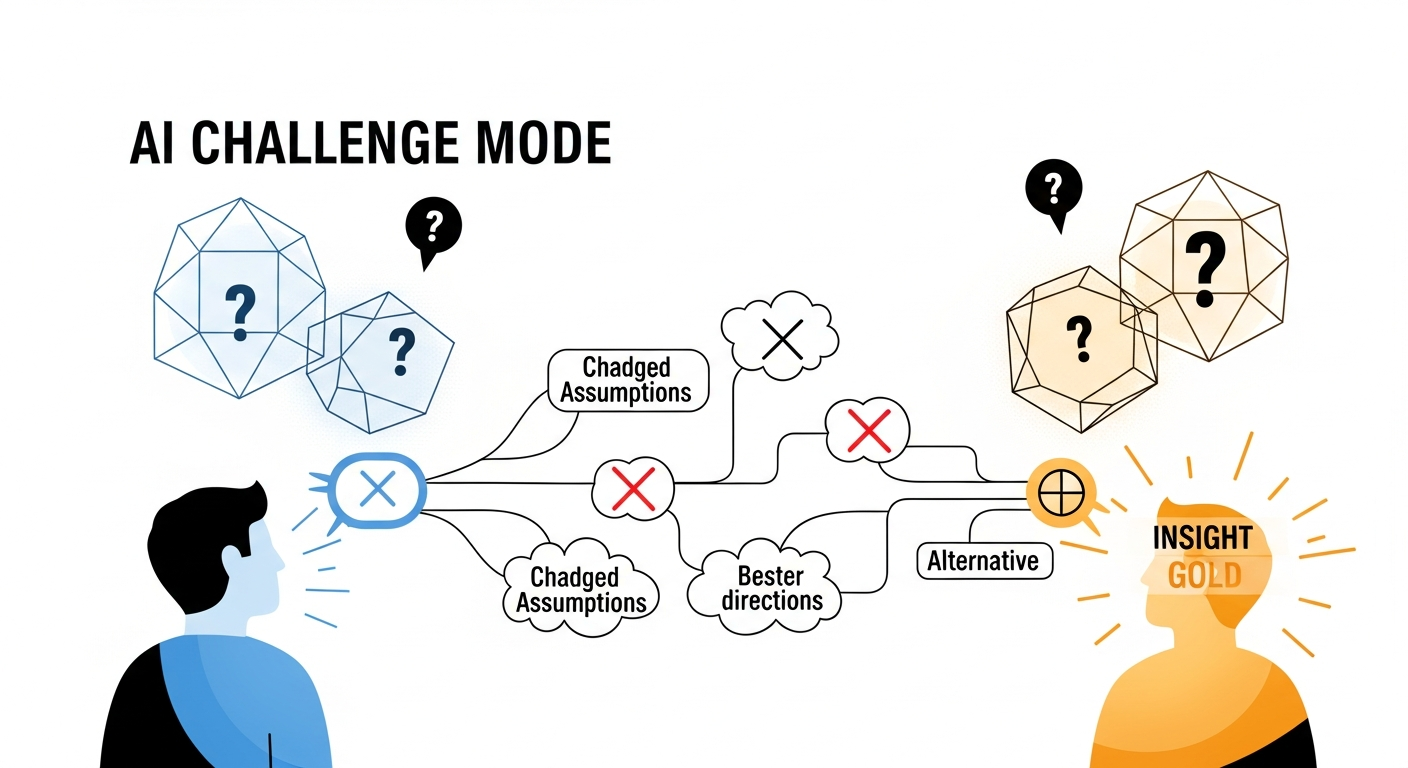

1. Build for Challenge, Not Compliance

The most valuable thought partner disagrees productively. It finds the flaw in your logic. It suggests the approach you dismissed too quickly. It asks the question you forgot to consider.

This requires different design:

- Challenge mode: Devil's advocate prompting

- Ideation mode: High-temperature sampling, diverse seeds

- Validation mode: Structured assumption testing

- Synthesis mode: Cross-domain connection
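One way to picture the four modes is as separate configurations rather than one all-purpose prompt. The sketch below is provider-agnostic: the `Mode` class, the prompt wording, the temperature values, and the request shape are all illustrative assumptions, not any vendor's actual API or recommended settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mode:
    system_prompt: str   # hypothetical wording, tune for your own use case
    temperature: float   # illustrative values only
    n_samples: int       # how many candidates to request per call


MODES = {
    "challenge": Mode(
        "Act as a devil's advocate. Find the weakest assumption in the idea.",
        temperature=0.7, n_samples=1),
    "ideation": Mode(
        "Generate many distinct ideas. Prefer surprising over safe.",
        temperature=1.2, n_samples=20),
    "validation": Mode(
        "List each assumption the idea depends on and how to test it.",
        temperature=0.2, n_samples=1),
    "synthesis": Mode(
        "Connect the idea to patterns from unrelated domains.",
        temperature=0.9, n_samples=5),
}


def build_request(mode_name: str, user_idea: str) -> dict:
    """Build a generic chat-style payload for the chosen mode."""
    mode = MODES[mode_name]
    return {
        "messages": [
            {"role": "system", "content": mode.system_prompt},
            {"role": "user", "content": user_idea},
        ],
        "temperature": mode.temperature,
        "n": mode.n_samples,
    }


request = build_request("ideation", "A marketplace for used lab equipment")
```

The point of the split is that ideation wants high temperature and many samples, while validation wants low temperature and a single careful answer. One shared configuration would compromise both.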

2. Accept Higher Miss Rates

Optimization for ideation means:

- More attempts > fewer perfect outputs

- Broad generation > narrow optimization

- Serendipity enablement > precision targeting
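Broad generation only pays off if the ideas you actually review differ from one another. The sketch below shows one simple way to keep a diverse subset: greedy farthest-point selection using Jaccard distance over word sets. This technique is swapped in for illustration and is not something the article's sources describe; a real system would more likely compare embeddings.

```python
def jaccard_distance(a: str, b: str) -> float:
    """1 minus the word overlap between two ideas (0 = same words, 1 = disjoint)."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    if not words_a and not words_b:
        return 0.0
    return 1.0 - len(words_a & words_b) / len(words_a | words_b)


def pick_diverse(ideas: list[str], k: int) -> list[str]:
    """Greedily keep k ideas, each as far as possible from those already kept."""
    if not ideas or k <= 0:
        return []
    chosen = [ideas[0]]            # seed with the first idea
    remaining = list(ideas[1:])
    while remaining and len(chosen) < k:
        # Pick the candidate whose nearest chosen idea is farthest away.
        best = max(
            remaining,
            key=lambda cand: min(jaccard_distance(cand, c) for c in chosen),
        )
        chosen.append(best)
        remaining.remove(best)
    return chosen


ideas = [
    "AI tutor for math students",
    "AI tutor for math teachers",
    "marketplace for used lab equipment",
]
shortlist = pick_diverse(ideas, 2)
```

Here the shortlist keeps the tutor idea and the marketplace idea, and drops the near-duplicate tutor variant.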

If your AI agrees with everything you say and never surprises you, it's not thinking with you—it's echoing you.

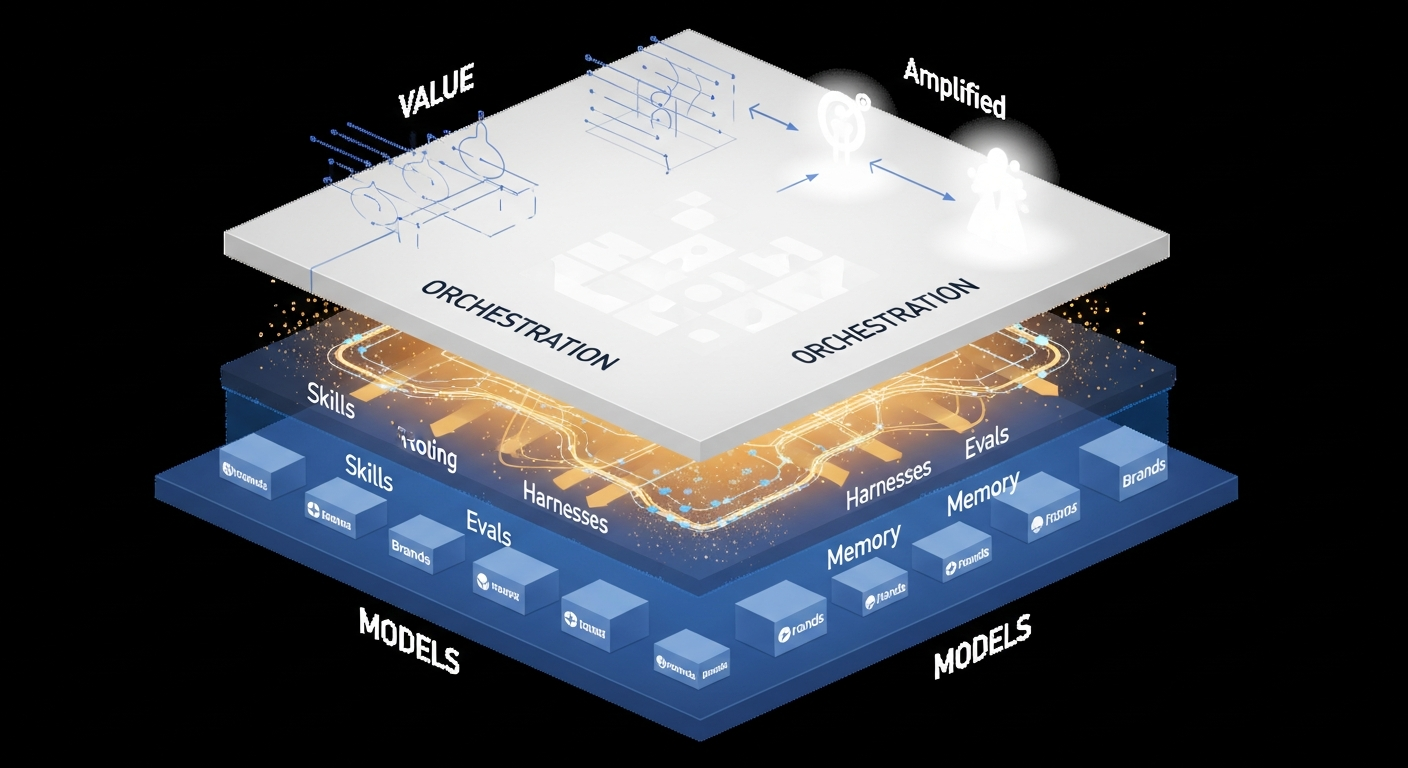

3. Invest in Orchestration

The capability convergence is real. Kimi K2.5 matches GPT-4.5 on coding at 5x lower cost. Open models approach frontier capability.

The moat isn't the model. It's the system around it:

- Skills: Reusable capability modules

- Harnesses: Orchestration patterns

- Evals: Measurement beyond benchmarks

- Memory: Persistent context
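As a rough illustration of how these four pieces fit together, here is a minimal sketch. Every name in it (`Skill`, `Memory`, `Harness`, `hit_rate`, the stub skill) is hypothetical and not taken from any framework, and the stub skill stands in for a real model call.

```python
from dataclasses import dataclass, field
from typing import Callable

# A skill is a reusable capability: prompt in, candidate outputs out.
Skill = Callable[[str], list[str]]


@dataclass
class Memory:
    """Persistent context, reduced here to an in-process list of notes."""
    notes: list[str] = field(default_factory=list)

    def remember(self, note: str) -> None:
        self.notes.append(note)


@dataclass
class Harness:
    """Orchestration: routes a prompt to a named skill and logs the run."""
    skills: dict[str, Skill]
    memory: Memory = field(default_factory=Memory)

    def run(self, skill_name: str, prompt: str) -> list[str]:
        outputs = self.skills[skill_name](prompt)
        self.memory.remember(f"{skill_name}: {len(outputs)} outputs")
        return outputs


def hit_rate(outputs: list[str], is_useful: Callable[[str], bool]) -> float:
    """Eval: the share of outputs a reviewer (or rubric) marks as useful."""
    if not outputs:
        return 0.0
    return sum(1 for o in outputs if is_useful(o)) / len(outputs)


def stub_brainstorm(prompt: str) -> list[str]:
    """Stand-in for a model call: varies the audience for an idea."""
    audiences = ("students", "nurses", "farmers", "lawyers")
    return [f"{prompt} for {audience}" for audience in audiences]


harness = Harness(skills={"brainstorm": stub_brainstorm})
candidates = harness.run("brainstorm", "scheduling assistant")
score = hit_rate(candidates, lambda idea: "nurses" in idea)
```

Because the harness depends only on the `Skill` signature, the model behind a skill can be swapped without touching the evals or the memory.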

LangChain's survey of 1,300+ professionals: 89% have observability. Only 52% have evals. The gap isn't capability—it's architecture.

The Paradigm Shift

Sam's prediction: "Idea guys who have ideas and look for teams to build them are about to have their day in the sun."

If AI can execute, the bottleneck becomes ideas worth executing.

The transformation:

- From: What can AI do?

- To: How can AI make me think better?

The builders who understand this—who create AI that challenges thinking, generates possibilities, and finds the unexpected connection—will capture the highest-value applications of this era.

Your AI shouldn't just execute your ideas. It should make them better.

Key Takeaways

- The highest-value AI applications may be improving human thinking quality, not executing tasks.

- For ideation, 5% useful output is transformative: the search space is vast and one good idea justifies hundreds of misses.

- Build for challenge, not compliance. The most valuable thought partner disagrees productively.

- Invest in orchestration. As models commoditize, differentiation shifts to Skills + Harnesses + Evals.

- The paradigm shift: From "What can AI do?" to "How can AI make me think better?"

Sources: OpenAI Town Hall (January 2026), AINews Newsletter (swyx), OpenAI Science publications, LangChain State of Agent Engineering, NeuroLeadership Institute

Written by

Global Builders Club
